Reflections from the SQLBits 2026 panel, Newport. The chair’s answers I never got to give.

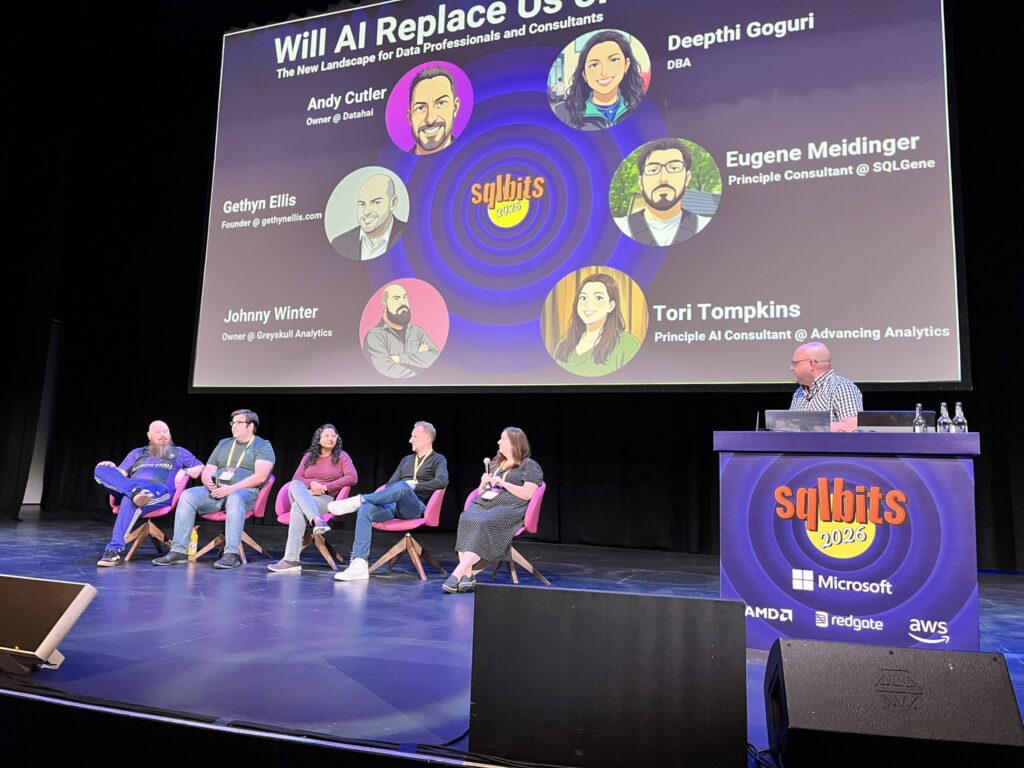

Last Friday at SQLBits in Newport, I chaired a panel called “Will AI Replace Us?” Five panellists, four themes, eight set questions and an hour on the clock. Andy Cutler, Eugene Meidinger, Deepthi Goguri, Tori Tompkins and Johnny Winter did the heavy lifting on the floor, and they did it very well.

The audience submitted dozens of questions through the QR code on the screen, and I made the chair’s mistake of being so determined to land the structure that I never got to share my own answers or reach many of the audience’s questions. I’m going to write a few posts to put that right.

So this is the post-match version. Same four themes, same questions, but the chair gets a turn. Where I’ve drawn on points the panel made on the day, I’ve tried to flag it; where I disagree with my panellists, and on a couple of points I do, I’ve tried to be honest about that too.

My headline position, before I get into the details: this is a raise, not a replacement, for almost everyone willing to do the work to adapt. But “almost everyone” is doing a lot of the lifting in that sentence, and the people, organisations and roles that don’t adapt will be in real trouble.

The interesting question isn’t whether AI replaces us. It’s who decides to be raised by it, and who gets left behind because their employer or their industry didn’t invest in the transition.

This is a raise, not a replace, for almost everyone willing to do the work – but the work is real.

The Opportunity

Where is AI genuinely improving how we work?

On the panel, I framed this question deliberately: not transformation, not someday, what did AI do for you this week? I wanted to ground the conversation in something concrete, because there’s a particular kind of AI conversation that floats two feet above reality and never lands. The answers from the panel were grounded, and mine would be too.

Where it’s genuinely improving how I work, and where I see it improving how my clients work, falls into three buckets.

The first is the boring middle of knowledge work. Drafting first-cut documents from notes. Restructuring a long Teams transcript into a usable summary. Writing the change log for a Power BI release. Pulling the headlines out of a 40-page Microsoft licensing PDF so I can have a sensible conversation with a finance director.

None of that is glamorous, and none of it is the stuff that makes the conference keynote, but it is where the time savings are real, repeatable and measurable. A working week with these tools is meaningfully different from one without them. That’s the test.

The second is technical scaffolding. Generating the boilerplate for a new Fabric notebook. Writing the first draft of a stored procedure or a YAML pipeline. Suggesting the test cases I forgot.

The skill these tools have most decisively raised the floor on is “getting started”. The cost of the blank page has dropped to near zero. That matters more than people realise, because for most of us the blank page wasn’t a five-minute problem. It was a half-day problem we hid under “research”.

The third, and this is where it gets interesting commercially, is in client conversations. I can interrogate a Microsoft licensing change, model a rough TCO, draft a discussion document, and prepare a workshop on a topic I’m only 70% of the way across, all in a fraction of the time I could have done a year ago.

That doesn’t replace the expertise; it amplifies it. It also raises the bar on what counts as competent advice, because if I can do it, my competition can too.

Where I’d push back gently on some of the audience submissions: a few questions assumed the gains have to be “put a number on it” quantifiable. They often aren’t, not in the sense of a clean ROI calculation.

The gains show up as compressed cycle time, fewer handoffs, better-prepared meetings, faster onboarding. Measuring that is hard. Refusing to act until you can measure it is a way of guaranteeing you fall behind.

Where are you seeing real productivity gains?

If I had to put a number on it, the honest answer is somewhere in the 15–40% range across the kinds of work I do, with significant variation by task.

Document drafting is at the top end. Strategic thinking, client positioning, and the actual hard parts of consulting work (understanding what’s really going on in an organisation, building trust, navigating politics) are barely affected.

AI doesn’t make me a better consultant. It makes me faster at the parts of the job that were always more craft than judgement.

The trap is mistaking individual productivity for productivity at the firm or industry level. Just because I can produce three drafts in the time I used to produce one doesn’t mean my clients want three drafts. The bottleneck has moved upstream, to whether the work was the right work in the first place. That’s a question AI doesn’t answer for you.

The Reality Check

Where is AI failing today? Quality, governance, security.

Plenty, and most of the failures I see in real client engagements aren’t the dramatic ones the press writes about. They’re quieter, more boring, and more expensive.

The first failure is treating AI output as expertise. The audience asked directly where I see this. Everywhere. Boards reading AI-summarised reports as if they were analyst reports. Strategy documents written by tools that don’t know the company. RFP responses where the bid team has clearly let the model freewheel and nobody’s read it before submission.

The output looks competent, which is precisely the problem. Competence-shaped output without competence behind it is worse than no output at all, because it short-circuits the review process that would have caught a bad human draft.

The control I’d put in place, and this is what I push hard on with clients, is a review gate. AI output enters the workflow at the same point a junior’s draft would: as a starting point that a senior signs off. Not as a finished artefact.

The moment AI output skips the review step, you have a quality problem, regardless of how good the model is.

The second failure is non-determinism in places it shouldn’t be. One of the audience questions, and it’s a good one, was about how to handle the fact that AI prompts aren’t deterministic when you’re trying to do standardised things like documentation or code.

The honest answer is that you don’t make the model deterministic. You make the process around it deterministic. That means: a prompt library with versioning. An evaluation rubric that scores output against what “good” looks like. Test cases that the output has to pass. Human sign-off where the cost of error is high.

The model is the messy bit; the system around it is what makes the output reliable.
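As a rough illustration of what “the system around it” can look like, here is a minimal Python sketch. It assumes a versioned prompt record, a rubric made of simple pass/fail checks, and a sign-off flag for high-risk work. Every name in it (`PromptVersion`, `RUBRIC`, `review_status`) and the checks themselves are hypothetical, chosen for illustration rather than taken from any particular tool.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class PromptVersion:
    """One entry in a prompt library; the version changes whenever the template does."""
    name: str
    version: str
    template: str


# Hypothetical prompt for a release change log.
PROMPT = PromptVersion(
    name="release_notes",
    version="1.2.0",
    template="Summarise the following change log under a 'Summary' heading:\n{change_log}",
)

# A rubric is a set of named checks; each returns True when the output passes.
Check = Callable[[str], bool]

RUBRIC: dict[str, Check] = {
    "has_summary_heading": lambda text: "Summary" in text,
    "under_200_words": lambda text: len(text.split()) <= 200,
    "no_placeholder_text": lambda text: "TODO" not in text,
}


def evaluate(output: str, rubric: dict[str, Check]) -> dict[str, bool]:
    """Score a model output against every check in the rubric."""
    return {name: check(output) for name, check in rubric.items()}


def review_status(output: str, rubric: dict[str, Check], high_risk: bool) -> str:
    """Reject on any failed check; route high-risk work to a human."""
    results = evaluate(output, rubric)
    if not all(results.values()):
        return "rejected"
    return "needs_human_sign_off" if high_risk else "accepted"
```

The model call is deliberately absent: whatever produces the draft, the same rubric and the same sign-off rule apply, and the prompt version is recorded so a change in output quality can be traced to a change in the prompt.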

On hallucinations specifically, I treat them like off-by-one errors in code. You don’t prevent them by hoping. You prevent them by structure: ground the model in retrieval over your own documents where you can, narrow the task as much as possible, demand citations or sources, and assume any factual claim is wrong until verified.

The teams that get burned are the ones that ask an LLM what year a regulation went into effect and don’t check.

The third failure is governance lag. Most organisations have rolled out Copilot faster than they’ve rolled out the data classification, sensitivity labels, and access control to make Copilot safe.

The result is the same Copilot demo everyone has now seen at least once: “oh, it just surfaced a document I shouldn’t have been able to see.”

That isn’t a Copilot failure. It’s a data hygiene failure that Copilot has helpfully exposed. The fix is unsexy and slow: SharePoint permissions, sensitivity labels, retention. The work nobody wanted to do for ten years is the work that gates safe AI deployment.

Is AI changing how organisations value expertise?

Yes, but not in the direction the press would have you believe. The lazy take is that AI commoditises expertise and senior people are about to be undercut. The reality I see is that AI raises the floor on competent execution, which actually makes genuine expertise — judgement, taste, pattern recognition across many engagements — more valuable, not less.

What’s changing is what counts as expertise. A consultant whose differentiation was “I can produce a polished deck quickly” is in serious trouble.

A consultant whose differentiation is “I know what to put in the deck because I’ve seen this go wrong three times before” is in a stronger position than they were two years ago, because the polished deck is now a commodity and the judgement about what to put in it is not.

AI raises the floor on competent execution. That makes genuine expertise more valuable, not less.

Roles and Careers

Which roles or tasks are most likely to change?

The roles most exposed are those built around tasks that AI can plausibly automate end-to-end with limited oversight: routine document production, first-line support triage, basic data extraction, and simple reporting.

Whole roles? Probably not. Significant chunks of the working week for a lot of roles? Yes.

To be specific, because the audience asked for specificity, the roles I’d watch closely over the next three to five years are:

- Junior business analysts whose week is mostly requirements gathering, meeting notes, and stakeholder summaries. Not because the role disappears, but because the senior analyst can now do that work themselves at low marginal cost. The pyramid flattens.

- Mid-tier technical writers and documentation specialists. AI is genuinely good at this work, and the volume of human writing required will fall.

- First-line analytics roles – the “pull this number for me” request that used to occupy half a day for a junior analyst. Self-serve BI was supposed to fix this and largely didn’t. AI plus a semantic model probably will.

- Some parts of consulting itself. Specifically, the bits where consulting was selling reusable knowledge: the deck of slides someone could have looked up, the framework that’s in any business book. AI is excellent at retrieving and recombining publicly available knowledge. The bits of consulting that depend on specific, hard-won, in-the-room judgement are not going anywhere.

The junior pipeline question – the one I want to push back on hardest

Several audience members asked variations on this question: if AI takes entry-level roles, where will the medium and senior experts of the next decade come from?

This is the most important question of the whole panel, and I think the industry is currently getting it badly wrong.

Here’s the dynamic I see: firms are using AI to absorb the work juniors used to do. That’s rational at the level of any individual quarter. It’s catastrophic over the next decade.

Senior expertise isn’t born; it’s grown, and it’s grown by doing the work that AI now does in thirty seconds. If you stop hiring juniors, or you hire them and put them on “oversee the AI” duty, you’ve hollowed out the apprenticeship that made the senior people senior in the first place.

The honest answer is that this is an industry responsibility problem, not a market problem the market will solve.

Firms must invest in training pathways that look different from the ones they had. That probably means: juniors do more of the judgement work earlier, with AI doing the production work; structured exposure to client conversations from week one rather than year three; deliberate apprenticeship rather than learn-by-osmosis.

The firms that do this will own the senior talent of 2032. The firms that don’t will be paying enormous money to hire from the firms that did, or they’ll discover that the senior pipeline they assumed would refill itself simply hasn’t.

I think this is the single biggest commercial risk facing professional services right now, and almost nobody is treating it with the urgency it deserves.

Senior expertise isn’t born. It’s grown by doing the work AI now does in thirty seconds. Stop hiring juniors and you’ve hollowed out the pipeline.

What skills are becoming more important?

If I had to pick one skill — and the question on the day was framed as the one skill you’d make your own kids learn — I’d pick the ability to define a problem clearly.

That sounds bland until you realise how rare it is. Most of the bad AI output I see comes from a vague brief, not a bad model. The same is true of most bad consulting work, most bad code, and most bad meetings.

Justin Bird made exactly this point in the audience comments and I want to give him credit for it: refining a task clearly, the way you would in sprint planning, is becoming the foundational skill.

The discipline of writing a good prompt is the discipline of writing a good ticket, which is the discipline of thinking clearly about what you actually want. People who can do this will use AI well. People who can’t will produce confident-looking nonsense at scale.

The other skills I’d back: critical reading, because the volume of plausible-looking output is about to explode; domain expertise, because AI commoditises generic knowledge but rewards specific knowledge; and the ability to verify, because nothing the model produces is trustworthy by default.

Staying Relevant

What should data people and consultants do now?

The question on the day was deliberately framed as: don’t give me a strategy, give me a task. Something they can start on Monday. So I’ll do the same.

Pick one task in your working week that takes you more than an hour and is mostly text production. Documentation, status reports, meeting summaries, first-cut analysis, whatever.

Spend a focused two hours building a repeatable AI-assisted workflow for that task. Not a clever prompt, a workflow. A template, a prompt, a checking step, a sign-off step. Run it for two weeks. Measure the time saved. Then do the same for the next task.
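For the “measure the time saved” step, something as small as the following Python sketch is enough. It assumes you know roughly how long the task took before and that you log the minutes for each AI-assisted run, including the checking and sign-off steps. The function name and the figures in the usage line are hypothetical.

```python
from statistics import mean


def time_saved(baseline_minutes: float, assisted_runs: list[float]) -> dict[str, float]:
    """Compare logged AI-assisted runs against the old baseline for one task."""
    if not assisted_runs:
        raise ValueError("log at least one assisted run before measuring")
    average = mean(assisted_runs)
    saved = baseline_minutes - average
    return {
        "average_assisted_minutes": average,
        "minutes_saved_per_run": saved,
        "percent_saved": 100 * saved / baseline_minutes,
    }


# Hypothetical example: a 90-minute status report, logged three times over two weeks.
result = time_saved(90, [60, 50, 70])
```

If the saving is small or negative once checking time is included, that is useful too: it tells you the workflow needs redesigning before you move on to the next task.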

This is unglamorous, and it is the entire game. The people who will be in a strong position in two years aren’t the people who read the most newsletters about AI. They’re the people who quietly redesigned twenty workflows and now operate at a level their peers can’t match.

For consultants specifically: start charging for outcomes, not hours. AI is shifting the industry from billing time to billing value, and the firms that adapt their commercial model will pull ahead of the firms that don’t.

If your offer is “we’ll spend 40 days doing a thing,” and AI lets you do that thing in 12, you have to either drop your fee or change what you’re selling.

The firms changing what they’re selling, to outcomes, to access to expertise, to retained advisory, are the ones building durable businesses.

Adapting skills or positioning

The thirty-second answer I’d give my 25-year-old self is this: be the person who understands both sides of the bridge.

The technology and the business. The model and the use case. The Copilot rollout and the actual workflow it’s changing. Specialists on either side will get squeezed. People who genuinely span both will be in demand for as long as I can see ahead.

On positioning more broadly: stop selling the thing AI is going to commoditise.

If your differentiation is “I know how to write SQL” or “I can build a Power BI report,” you’re selling production work that’s about to get a lot cheaper.

If your differentiation is “I know what to measure and why,” “I know how to land a Copilot rollout in a 5,000-person organisation,” “I know how to translate between business and IT,” you’re selling judgement, and judgement holds its value.

Replace, or raise

The deck closed with two words on the final slide: Replace. Raise. You decide which one it is for you. I meant it as a deliberately open prompt for the audience, but if you want my answer:

It’s a raise for almost everyone willing to do the work to adapt.

It’s a replace for the parts of jobs that were always more production than judgement, and for the people, firms and industries that don’t invest in the transition.

The dividing line isn’t about your role title or your technical aptitude. It’s about whether you, and the organisation around you, are willing to redesign how work gets done rather than bolting AI onto the side and hoping for the best.

The questions the audience submitted and the answers the panel gave were sharper than any think-piece I’ve read on this topic. That’s why these conversations matter, and why I’m glad SQLBits gives them room.

To the panellists — Andy, Eugene, Deepthi, Tori, Johnny — thank you again.

To everyone in the room who submitted a question: this post is your answer, and I’d love to hear which bits you disagree with.

Replace or raise. You decide.

Two things if this resonated:

→ Try the Prompt Frameworks Toolkit — interactive, in your browser, free.

→ Download The AI Adoption Playbook — the five frameworks, fully written up.

Both built for the people who’d rather redesign twenty workflows than read another newsletter about AI.
